AsianScientist (Sep. 15, 2021) – Come rain or shine, day or night, computers can now ‘see’ better thanks to novel algorithms that sharpen images and videos for analytics applications. An international team presented the research at the annual Conference on Computer Vision and Pattern Recognition (CVPR), held June 21-24, 2021.

By processing image and video data, computer vision technologies are driving innovations like automated surveillance systems and self-driving cars. But just as humans have difficulty seeing in the dark, there’s not much a computer can do with low-quality visual inputs, such as videos blurred by streaks of rain.

While people can use editing tools to correct such images and videos to a certain extent, computer vision applications need more powerful, built-in enhancement techniques to function without a hitch. Night-time scenes, for example, pose a challenge for state-of-the-art methods, as dialing up the brightness doesn’t fix issues like glare from streetlights.

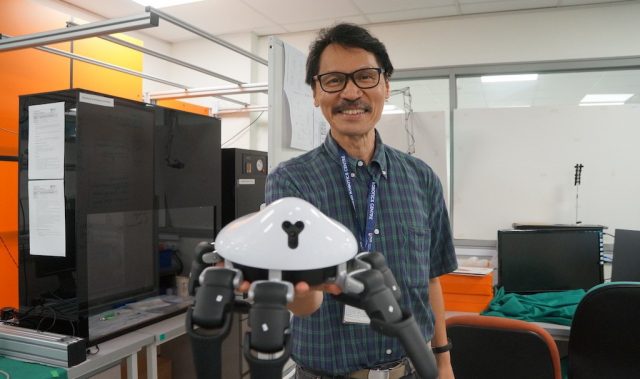

In two separate studies, researchers led by Dr. Robby Tan, Associate Professor at the National University of Singapore (NUS) and Yale-NUS College, developed algorithms based on neural networks to enhance videos degraded by poor lighting and rain. Loosely modeled on how the human brain processes data, neural networks learn to recognize patterns and solve problems, making them a powerful tool in artificial intelligence.

To enhance night-time videos, the team used separate networks for removing noise and for handling light effects. With individual networks dedicated to different issues, the method increased the brightness of dim scenes while suppressing glare to render a clear final output.
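To see why a dedicated glare step helps, consider a toy illustration in Python. This is not the team's neural networks: the hand-written functions below (`naive_brighten`, `suppress_glare`, `brighten`, `enhance`) and their parameters (`knee`, `strength`, `gamma`) are hypothetical stand-ins, applied to a single row of pixel intensities between 0 and 1.

```python
def naive_brighten(pixels, gain):
    """Multiply every pixel by `gain`, clipping at the maximum value 1.0."""
    return [min(1.0, p * gain) for p in pixels]


def suppress_glare(pixels, knee=0.3, strength=0.2):
    """Compress intensities above `knee` so bright light sources stop dominating."""
    return [p if p <= knee else knee + (p - knee) * strength for p in pixels]


def brighten(pixels, gamma=0.5):
    """Apply a gamma curve, which lifts dark values far more than bright ones."""
    return [p ** gamma for p in pixels]


def enhance(pixels):
    """Two dedicated steps in sequence: tame the glare first, then brighten."""
    return brighten(suppress_glare(pixels))


if __name__ == "__main__":
    # Hypothetical row of pixels: a dark road with a streetlight and its halo.
    scanline = [0.02, 0.05, 0.04, 0.4, 1.0, 0.4, 0.03]
    print("naive:    ", naive_brighten(scanline, gain=5))
    print("two-step: ", [round(p, 2) for p in enhance(scanline)])
```

In this sketch, the naive gain pushes the streetlight's halo to pure white along with the lamp itself, so the glare spreads. The two-step version lifts the dark road pixels while keeping the lamp and its halo below saturation.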

Shifting from darkness to droplets, Tan and colleagues devised another neural network-based method to counteract the effects of rain in videos, using frame alignment to remove streaks that appear in random directions from frame to frame. By estimating the orientation and movement of the camera that captured the video, the algorithm warps each frame to align with its neighboring frames, ensuring smooth and consistent removal of rain.
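The intuition behind frame alignment can be shown with a simplified sketch. It assumes one-dimensional frames and camera offsets that are already known, whereas the team's method estimates the camera motion with a neural network. The helpers `shift` and `derain` are hypothetical names, and the per-pixel median is a classical stand-in for the learned rain removal.

```python
from statistics import median


def shift(frame, offset):
    """Shift a 1-D frame right by `offset` pixels (left if negative).

    Positions with no source pixel are marked None.
    """
    out = [None] * len(frame)
    for i, value in enumerate(frame):
        j = i + offset
        if 0 <= j < len(frame):
            out[j] = value
    return out


def derain(frames, offsets):
    """Align every frame to the reference view, then take a per-pixel median.

    `offsets[k]` is how far the scene has moved right in frame k relative to
    the reference view, so shifting by -offsets[k] undoes the camera motion.
    Rain streaks land on different pixels in each frame, so once the scene
    is aligned the median discards them as outliers.
    """
    aligned = [shift(f, -o) for f, o in zip(frames, offsets)]
    result = []
    for column in zip(*aligned):
        valid = [v for v in column if v is not None]
        result.append(median(valid) if valid else None)
    return result


if __name__ == "__main__":
    # Hypothetical data: three views of one scene, each with a bright streak (1.0).
    frames = [
        [0.1, 0.2, 1.0, 0.4, 0.5, 0.6],  # reference view, offset 0
        [0.0, 0.1, 0.2, 0.3, 1.0, 0.5],  # scene moved right by 1
        [1.0, 0.3, 0.4, 0.5, 0.6, 0.7],  # scene moved left by 1
    ]
    print(derain(frames, offsets=[0, 1, -1]))
```

Skipping the alignment step and taking the median of the raw frames mixes different parts of the scene at each pixel, which is why the camera motion has to be accounted for first.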

Moreover, modeling these movements allowed the system to edit out accumulated water droplets, which appear as a dense veil. As if peeling the upper layer of rain away from the underlying objects, the network estimated the depth of the scene to remove the rain veil, outperforming existing methods that handle rain streaks alone.
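The link between depth and the rain veil can be illustrated with a scattering model widely used in dehazing research, in which the fraction of scene light reaching the camera (the transmission) decays exponentially with distance. Whether the team's network uses exactly this form is an assumption here; the function names and the parameters `airlight`, `beta` and `t_min` are hypothetical, and depth is taken as known rather than estimated.

```python
import math


def transmission(depth, beta):
    """Fraction of scene light that survives the veil over distance `depth`."""
    return math.exp(-beta * depth)


def add_veil(scene, depths, airlight, beta):
    """Simulate the veil: farther pixels are pulled toward the veil color."""
    veiled = []
    for j, d in zip(scene, depths):
        t = transmission(d, beta)
        veiled.append(j * t + airlight * (1.0 - t))
    return veiled


def remove_veil(observed, depths, airlight, beta, t_min=0.05):
    """Invert the model per pixel, given depth (assumed known in this sketch)."""
    restored = []
    for i, d in zip(observed, depths):
        # Clamp the transmission to avoid dividing by values near zero.
        t = max(transmission(d, beta), t_min)
        restored.append((i - airlight * (1.0 - t)) / t)
    return restored


if __name__ == "__main__":
    # Hypothetical pixel intensities and distances for near, mid and far objects.
    scene = [0.2, 0.5, 0.8]
    depths = [1.0, 5.0, 20.0]
    veiled = add_veil(scene, depths, airlight=0.9, beta=0.1)
    print([round(v, 3) for v in veiled])
    print([round(v, 3) for v in remove_veil(veiled, depths, airlight=0.9, beta=0.1)])
```

Because the veil thickens with distance, a single global correction cannot undo it. A per-pixel depth estimate is what lets distant objects be restored without overcorrecting nearby ones.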

Through their visibility enhancement algorithms, the team looks to optimize computer vision systems further, helping usher in automated technologies that can perform well in all sorts of environmental conditions.

“We strive to contribute to advancements in the field of computer vision, as they are critical to many applications that can affect our daily lives, such as enabling self-driving cars to work better in adverse weather conditions,” concluded Tan.

The articles can be found at: Sharma & Tan (2021) Nighttime Visibility Enhancement by Increasing the Dynamic Range and Suppression of Light Effects.

Yan et al. (2021) Self-Aligned Video Deraining with Transmission-Depth Consistency.

———

Source: Yale-NUS College; Photo: Filip Mroz/Unsplash.

Disclaimer: This article does not necessarily reflect the views of AsianScientist or its staff.