AsianScientist (Jan. 3, 2017) – Think about the smartphone in your hand and compare it to the first iPhones that were launched in 2007. In the decade that has passed, the speed of your phone’s central processing unit (CPU) has quadrupled, its storage has increased 16-fold, and it now boasts 20-fold more memory—all without costing very much more.

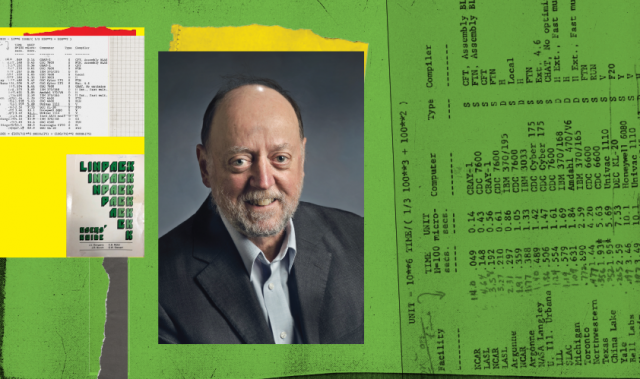

One man predicted this would happen. In 1972, Professor Gordon Bell formulated what is now known as Bell’s Law, describing the formation, evolution and death of different types of computing systems. The Law predicted that computer classes would evolve along one of three paths depending on their price: an established class with a constant price but continually improving performance; a supercomputer class which would get progressively more expensive in the race to be the fastest; and finally, a cheaper ‘minimal’ class that would open up new markets.

More than 40 years after it was first proposed, Bell’s Law continues to hold, with smartphones as an example of an established class that conforms to the Law.

“I believe it will persist for many decades to come, with different materials and transducers,” Bell told Supercomputing Asia.

Hands-on experience and historical evolution

Computers were still in their infancy when Bell graduated with a degree in electrical engineering from MIT in 1957, but what he saw had him hooked. While in Australia on a Fulbright scholarship, he had the opportunity to work on the English Electric DEUCE, a machine that Alan Turing had been involved with during his time at the National Physical Laboratory.

Back at MIT for his PhD studies, Bell worked on the TX-0, one of the first computers to use transistors instead of the prevailing vacuum tube technology. It was then that he met fellow engineers Ken Olsen and Harlan Anderson, who persuaded Bell to join their newly formed company to work on prototypes of what they called Programmed Data Processor (PDP) minicomputers.

“What fascinated me was the incredible versatility to do everything including calculating, storing, communicating, controlling, etc.,” he enthused.

As the lead architect of the system, Bell helped the PDP series become one of the most popular minicomputers of all time, with the PDP-11 selling over 600,000 units.

That achievement was surpassed during his tenure as vice president of engineering at Digital Equipment Corporation (DEC), where he spearheaded the Virtual Address Extension (VAX) line of minicomputers that catapulted DEC to the position of second-largest company in the industry.

In between developing those two blockbusters, Bell taught computer science at Carnegie Mellon University from 1966 to 1972. While working on a book with his mentor Allen Newell, he became interested in the classification and evolution of different classes of computers. Coupled with his hands-on experience in developing successful minicomputers, Bell’s academic interest in the historical evolution of the first computing systems culminated in the formulation of Bell’s Law.

Of policy and prizes

Bell’s career took another turn in 1983, when a heart attack prompted his resignation from DEC. Far from slowing down, however, he turned his attention to computing at the national and international level, becoming the founding assistant director of the National Science Foundation’s directorate for Computer & Information Science & Engineering (CISE) in 1986 and launching the ACM Gordon Bell Prize the following year.

“I funded the prize to reward and acknowledge those people who program these highly parallel computers,” Bell added. “The first prize really got the community interested in exploiting parallelism.”

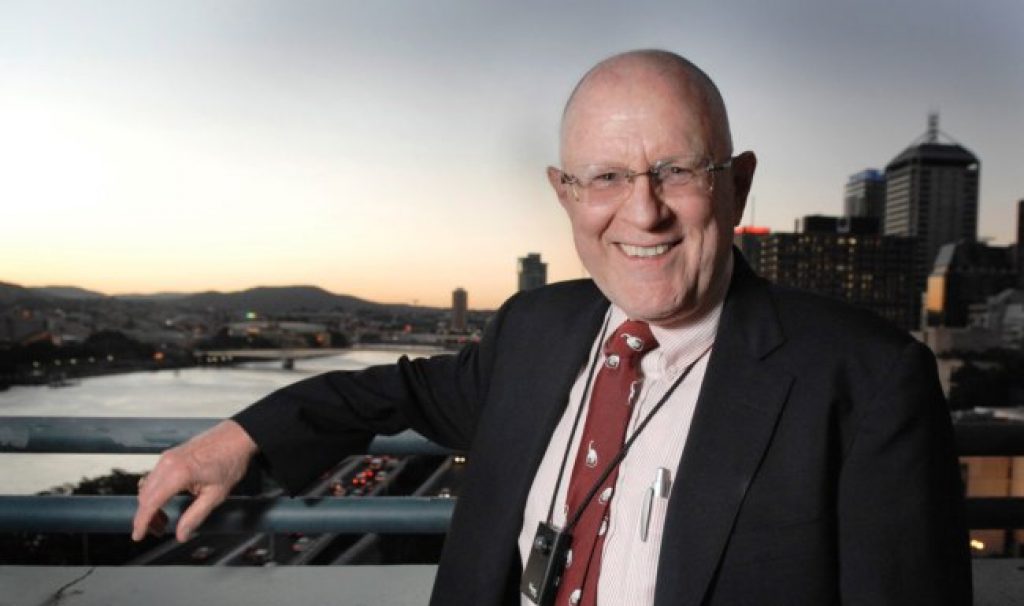

The Gordon Bell Prize is coveted less for its US$10,000 cash reward than for the prestige it confers on the recipient; it is akin to the Nobel Prize of supercomputing. The inaugural prize in 1988 went to Dr. John Gustafson, now with the Agency for Science, Technology and Research, Singapore.

Bell himself is no stranger to accolades, having won the National Medal of Technology in 1991 and the inaugural IEEE John von Neumann Medal in 1992, among others.

The act of memory

Now in the third act of his career, the 82-year-old Bell continues to investigate the possibilities afforded by computers. In particular, he is interested in the possibility of using computers as an aid to human memory.

“I got the idea when [artificial intelligence expert] Raj Reddy wanted to scan the books I had written as his project to preserve the 20th century. I had boxes of all the papers, books, CDs, photos and videotapes that we were trying to document,” Bell shared.

That project evolved into MyLifeBits, a real-time lifelogging experiment that attempted to capture every bit of information that Bell generated between 1998 and 2007. Microsoft, where Bell worked full time from 1995 until his retirement in 2012, has since developed software that annotates all that data, making it easy to sort and retrieve.

Though lifelogging is no longer popular, wearable technology and the Internet of Things (IoT) could spark a revival.

“I think we will see IoT-based low power wireless devices integrated to form single chips for connecting to everything in the future. The next decade will probably be more deployment followed by all kinds of efforts to understand and exploit all the data,” Bell said.

But at the end of the day, Bell does not see himself as a futurist. Of all the different hats he has worn over the course of his six-decade-long career, one remains closest to his heart.

“The early days when I actually did designs were perhaps the fondest memories,” he said. “I would like to be remembered as an engineer who worked, by example, to support the creation of more engineers and engineering thinking.”

This article was first published in the print version of Supercomputing Asia, January 2017.

———

Copyright: Asian Scientist Magazine.
