AsianScientist (Jan. 3, 2017) – If supercomputers were cars, Tsubame-KFC at the Tokyo Institute of Technology would be a Tesla Model S: powerful but green. When it was unveiled in 2013, Tsubame-KFC promptly took first place on both the Green500 and Green Graph500 lists, convincingly demonstrating that it is possible to be mean, lean and clean. But just how its team achieved this feat might come as a surprise: they dipped their entire supercomputer in a vat of oil.

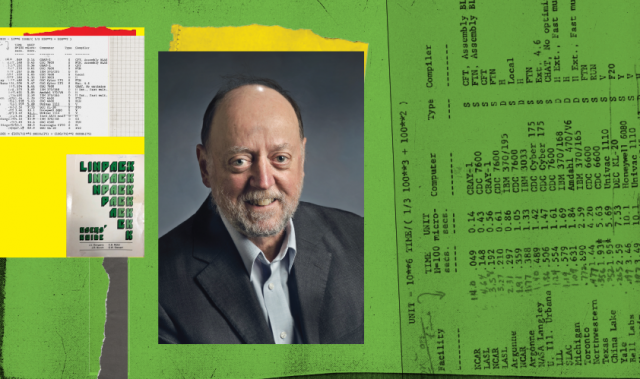

In this interview, Professor Satoshi Matsuoka, the key architect of the Tsubame projects, shares with Supercomputing Asia his team's seemingly unconventional approach to going green and the role that energy-efficient supercomputers will play in the race to exascale.

Why is green supercomputing important?

Satoshi Matsuoka: The power budget has now become one of the most limiting constraints in high performance computing (HPC) and other large-scale information technology (IT) infrastructures.

In these days of massively parallel and distributed computing, machine performance is dictated by the size of the machine, and size is in turn proportional to the demand for energy.

Given that large machines are hitting megawatt-scale and beyond—equivalent to the electricity consumption of hundreds to thousands of households—increasing performance would be very difficult unless we reduce power consumption.
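The power-budget argument above reduces to simple arithmetic: once power is capped, sustained performance is energy efficiency multiplied by power. The Python sketch below is illustrative only; the 20 MW budget and the efficiency levels are assumed values, not figures from the interview.

```python
# Minimal sketch of power-capped performance arithmetic.
# The 20 MW budget and efficiency levels are hypothetical assumptions.

def performance_flops(efficiency_gflops_per_watt: float, power_watts: float) -> float:
    """Sustained FLOP/s achievable at a given efficiency and power draw."""
    return efficiency_gflops_per_watt * 1e9 * power_watts


def required_efficiency(target_flops: float, power_budget_watts: float) -> float:
    """GFLOPS/W needed to reach target_flops within power_budget_watts."""
    return target_flops / power_budget_watts / 1e9


if __name__ == "__main__":
    EXAFLOPS = 1e18
    BUDGET_W = 20e6  # assumed 20 MW facility budget (hypothetical)
    print(f"Needed for exascale: {required_efficiency(EXAFLOPS, BUDGET_W):.0f} GFLOPS/W")
    for eff in (5.0, 15.0, 50.0):  # hypothetical efficiency levels
        pflops = performance_flops(eff, BUDGET_W) / 1e15
        print(f"{eff:5.1f} GFLOPS/W -> {pflops:,.0f} PFLOPS at 20 MW")
```

Under these assumptions, the only lever left at a fixed budget is efficiency: a machine cannot simply be made bigger.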

What makes Tsubame-KFC so energy efficient?

SM: KFC’s efficiency comes from various technological elements. One is the aggressive adoption of many-core technologies in the form of densely packed graphics processing units (GPUs).

Another is detailed power measurement and proactive control of the voltage and frequency of the GPUs to achieve maximum efficiency. One other major element is oil immersion cooling, by which we significantly reduce the power required for cooling, thanks to the superior thermal properties of liquid versus air. [Per unit volume, oil can absorb roughly 1,200 times more heat than air.] Oil immersion cooling further eliminates the need for power-hungry fans, both in the chassis and in the rack.
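The bracketed comparison can be checked with a back-of-the-envelope calculation. The roughly 1,200-fold figure holds per unit volume (density times specific heat); per unit mass, oil's specific heat is only about twice that of air. The sketch below uses typical textbook property values, which are assumptions since real oils vary, and estimates the coolant flow needed to carry away a hypothetical 30 kW rack load.

```python
# Rough comparison of air and mineral oil as coolants.
# Property values are assumed textbook approximations; real oils vary.
from dataclasses import dataclass


@dataclass(frozen=True)
class Coolant:
    name: str
    density_kg_m3: float          # rho
    specific_heat_j_kg_k: float   # c_p, per unit mass

    @property
    def volumetric_heat_capacity(self) -> float:
        """J/(m^3*K): heat absorbed per cubic metre per kelvin of warming."""
        return self.density_kg_m3 * self.specific_heat_j_kg_k

    def flow_for_heat_load(self, heat_w: float, delta_t_k: float) -> float:
        """Volumetric flow (m^3/s) needed to carry heat_w with a delta_t_k rise."""
        return heat_w / (self.volumetric_heat_capacity * delta_t_k)


AIR = Coolant("air", density_kg_m3=1.2, specific_heat_j_kg_k=1005.0)
MINERAL_OIL = Coolant("mineral oil", density_kg_m3=850.0, specific_heat_j_kg_k=1700.0)

if __name__ == "__main__":
    ratio = MINERAL_OIL.volumetric_heat_capacity / AIR.volumetric_heat_capacity
    print(f"Per-volume heat capacity ratio (oil/air): {ratio:,.0f}x")
    mass_ratio = MINERAL_OIL.specific_heat_j_kg_k / AIR.specific_heat_j_kg_k
    print(f"Per-mass (specific) heat ratio: {mass_ratio:.1f}x")
    # Hypothetical 30 kW rack, coolant allowed to warm by 10 K.
    for coolant in (AIR, MINERAL_OIL):
        litres_per_s = coolant.flow_for_heat_load(30_000, 10) * 1000
        print(f"{coolant.name:12s}: {litres_per_s:,.2f} L/s")
```

With these assumed values, moving the same heat takes on the order of a thousand times less oil by volume than air, which is why the fans can go.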

Also, although the oil may be at 40 degrees Celsius or more, all the components, especially the GPUs and central processing units (CPUs), run much cooler than they would in air-cooled systems, again because of the large difference in thermal conductivity. This reduces leakage current, which rises with chip temperature and wastes power.
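Leakage (static) power is often approximated as growing exponentially with die temperature. The toy model below illustrates that trend only; the reference power, reference temperature and 20 K doubling interval are hypothetical parameters, not measurements from Tsubame-KFC.

```python
# Toy model: leakage power grows roughly exponentially with die temperature.
# ref_power_w, ref_temp_c and doubling_k are hypothetical, for illustration.

def leakage_power_w(temp_c: float, ref_power_w: float = 30.0,
                    ref_temp_c: float = 60.0, doubling_k: float = 20.0) -> float:
    """Leakage power (W) at temp_c under an assumed exponential model."""
    return ref_power_w * 2.0 ** ((temp_c - ref_temp_c) / doubling_k)


if __name__ == "__main__":
    # Hypothetical die temperatures: air-cooled vs oil-immersed.
    for label, temp in (("air-cooled", 80.0), ("oil-immersed", 50.0)):
        print(f"{label:12s} {temp:4.0f} C -> {leakage_power_w(temp):5.1f} W leakage")
```

The exact curve depends on the process technology; the point is only that a cooler die spends less power on leakage.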

Finally, since the oil can be hotter than the ambient air, it can be cooled by the ambient air itself rather than by power-hungry heat pumps. Overall, both the machine itself and the cooling system are very efficient.
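The free-cooling point follows from the simplest dry-cooler (radiator) model, in which heat rejected is roughly proportional to the temperature difference between the fluid and the outside air: Q ≈ UA × (T_fluid − T_ambient). The sketch below is a simplification (real coolers use a log-mean temperature difference), and the UA value, temperatures and load are hypothetical.

```python
# Simplified dry-cooler model: Q ~= UA * (T_fluid - T_ambient).
# UA, temperatures and heat load below are hypothetical values.

def dry_cooler_capacity_w(ua_w_per_k: float, fluid_temp_c: float,
                          ambient_c: float) -> float:
    """Heat (W) a simple dry cooler can reject; zero if fluid is not warmer."""
    return max(0.0, ua_w_per_k * (fluid_temp_c - ambient_c))


def needs_heat_pump(load_w: float, ua_w_per_k: float,
                    fluid_temp_c: float, ambient_c: float) -> bool:
    """True if ambient air alone cannot reject load_w."""
    return dry_cooler_capacity_w(ua_w_per_k, fluid_temp_c, ambient_c) < load_w


if __name__ == "__main__":
    LOAD_W, UA = 40_000.0, 3_000.0  # hypothetical 40 kW load, 3 kW/K cooler
    print("40 C oil, 25 C day -> heat pump?", needs_heat_pump(LOAD_W, UA, 40.0, 25.0))
    print("18 C loop, 25 C day -> heat pump?", needs_heat_pump(LOAD_W, UA, 18.0, 25.0))
```

A loop that must stay below ambient temperature can never be cooled by outside air alone, whereas warm oil can.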

In which areas does Japan lead the supercomputing world? What are its comparative weaknesses?

SM: Japan’s strength is that it has been involved with supercomputers for a long time and has experience with developing talent. We also have two vendors that are developing their own CPUs for HPC, namely Fujitsu and NEC. This gives us the capability to build the entire system from the ground up, for both hardware and software. Also, there are many home-grown applications.

The weakness is that we have relied on home-grown technologies for too long. IT system development has become much more horizontal, assimilating technologies from everywhere, as other IT sectors such as smartphones demonstrate. Also, the CPUs made by Fujitsu and NEC are not sold separately but only as part of a complete system, unlike the processors made by Intel or NVIDIA.

Will Japan be the first to develop an exascale supercomputer? What are the key challenges standing in its way?

SM: I cannot comment on this publicly due to my non-disclosure agreement with the [Japanese] government regarding the Flagship 2020 “Post-K” project. What I can say is that in any exascale development the principal problem will be power efficiency, in addition to many other obstacles such as reliability, scaling, programming, I/O (input/output), etc.

Why has Japan made exascale computing a priority?

SM: There are many applications that will benefit from exascale. For Post-K, there are nine strategic application areas and four emerging areas. They span medicine and pharmaceuticals, environmental and seismic science, climate and weather, manufacturing, advanced materials, brain simulation and combustion, as well as fundamental sciences such as the simulation of dark matter.

What technical advances in supercomputing do you anticipate in the next ten years?

SM: In the short term, I believe there will be a significant push for artificial intelligence (AI) and Big Data to become dominant themes in HPC, steering the improvement of the entire ecosystem.

In the longer run, we are approaching the end of the so-called Moore’s law, around 2025 at the earliest. At that point, lithographic feature sizes will stop shrinking, leaving transistor density and power efficiency essentially fixed over time; within a fixed power budget, this means that FLOPS will cease to increase. This limit will be the most challenging problem facing all of IT, not just HPC.

My belief is that the alternative strategy to increase performance beyond the end of Moore’s law will be the relative increase in the performance of data-related parameters such as memory capacity and bandwidth, as well as the interconnect bandwidth; in other words, improving performance as measured by BYTES.

Along with the customization of processing to save transistors depending on the type of data, I see a transition of computing from FLOPS to BYTES. Of course, this entails a complete change in the use of devices, hardware architectures, software stack, programming, as well as algorithms. It will be a ten-year or longer challenge to devise a new paradigm for computing systems, a challenge that has to start now in basic research.
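One standard way to see why BYTES can matter once FLOPS stop growing is the roofline model, which is not mentioned in the interview: attainable performance is the lower of peak FLOP/s and memory bandwidth multiplied by arithmetic intensity (FLOPs per byte moved). The sketch below uses a hypothetical machine and rough, assumed intensities for three kernel types.

```python
# Roofline model: attainable FLOP/s = min(peak, bandwidth * arithmetic intensity).
# Peak, bandwidths and kernel intensities are hypothetical, for illustration.

def attainable_gflops(peak_gflops: float, bandwidth_gb_s: float,
                      flops_per_byte: float) -> float:
    """Roofline bound: compute-limited or bandwidth-limited, whichever is lower."""
    return min(peak_gflops, bandwidth_gb_s * flops_per_byte)


if __name__ == "__main__":
    PEAK_GFLOPS = 10_000.0  # hypothetical peak, assumed frozen
    # Rough, assumed arithmetic intensities (FLOPs per byte from memory).
    kernels = {"stream-like": 0.1, "sparse mat-vec": 0.25, "dense mat-mul": 50.0}
    for bandwidth in (1_000.0, 2_000.0):  # hypothetical GB/s, then doubled
        print(f"Memory bandwidth {bandwidth:,.0f} GB/s:")
        for name, intensity in kernels.items():
            gflops = attainable_gflops(PEAK_GFLOPS, bandwidth, intensity)
            print(f"  {name:15s} {gflops:8,.0f} GFLOP/s")
```

With peak FLOPS held fixed, doubling bandwidth doubles the memory-bound kernels while the compute-bound one stays at the ceiling. This is one reading of the FLOPS-to-BYTES argument, not a description of any specific system design.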

This article was first published in the print version of Supercomputing Asia, January 2017.

———

Copyright: Asian Scientist Magazine.

Disclaimer: This article does not necessarily reflect the views of AsianScientist or its staff.