AsianScientist (Feb. 13, 2019) – When the first biological eye appeared some 600 million years ago, the rules of survival were irrevocably changed. By being able to detect light and respond to it, organisms that could see were better at seeking out food and avoiding predators. “In the land of the blind, the one-eyed man is king,” wrote the 15th-century Dutch scholar Erasmus. He was many millennia late in making that observation.

While the sense of sight used to be the domain of living things, humans are now seeking ways to endow our machines with vision. This goes beyond creating lenses for cameras and storage devices for images or videos. What scientists want to do is allow machines to recognize visual information and make sense of it, ideally in real time. ‘Computer vision’ is the official term for this technology, and practical applications of it are already emerging. Among the frontrunners in developing and deploying computer vision is Chinese artificial intelligence (AI) company SenseTime.

Founded in October 2014 by Professor Tang Xiaoou of the Chinese University of Hong Kong (CUHK), SenseTime has risen meteorically to become the world’s biggest AI startup unicorn, having raised a total of US$1.6 billion in funding over the last four years. Counted among its backers are China’s e-commerce giant Alibaba and Singapore’s state investment firm Temasek Holdings, which see tremendous potential in SenseTime’s core technologies.

“SenseTime is the first to introduce deep learning into the area of computer vision and the first to develop a facial recognition system that surpasses human performance,” said Assistant Professor Lin Dahua, director of the CUHK-SenseTime Joint Lab and a co-founder of SenseTime, in an interview with Supercomputing Asia. “Our offerings are built on more than 20 years of in-house research and development, and we now have more than 700 partners and customers in China and around the world using our deep learning platform.”

A look beneath the surface

By deep learning, Lin is referring to the “tens to hundreds of layers of computation” that allow a machine to recognize an image. In essence, the coded instructions—known as algorithms—tell the computer how to pick out features or patterns in pictures and classify them automatically.

For example, one layer could be detecting the edges of an object in an image, while another could be identifying color or shape. By combining these multiple layers of instructions to create an artificial neural network—so called because it mimics the way the human brain processes information—a machine is able to infer the content of an image, even to the point of captioning or describing it.
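To make the idea of an edge-detecting layer concrete, here is a minimal sketch in plain Python. It is an illustration only, not SenseTime's method: the tiny image and the Sobel-style filter are hand-written assumptions, whereas a real network learns its filter values from data during training.

```python
# Hypothetical 5x5 grayscale image: dark (0) on the left, bright (9) on the right.
IMAGE = [
    [0, 0, 9, 9, 9],
    [0, 0, 9, 9, 9],
    [0, 0, 9, 9, 9],
    [0, 0, 9, 9, 9],
    [0, 0, 9, 9, 9],
]

# Sobel-style filter that responds to dark-to-bright changes from left to right.
# In a trained network these nine numbers would be learned, not written by hand.
KERNEL = [
    [-1, 0, 1],
    [-2, 0, 2],
    [-1, 0, 1],
]


def apply_filter(image, kernel):
    """Slide the kernel over the image (no padding) and return the response map.

    This is the cross-correlation that deep learning libraries call "convolution".
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(kernel[i][j] * image[r + i][c + j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


def relu(feature_map):
    """Keep positive responses and zero out the rest, a common layer activation."""
    return [[max(0, value) for value in row] for row in feature_map]


edges = relu(apply_filter(IMAGE, KERNEL))
for row in edges:
    print(row)
```

The response map is large wherever the filter window straddles the dark-to-bright boundary and zero over the uniform bright region. A deeper layer would take such maps as its input and combine them into shapes and, eventually, whole objects.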

But before a computer can make inferences accurately, it needs to be trained, said Lin. Just as a child might learn through reading more books or repeating a series of actions, an artificial neural network is trained with data that is funnelled through its layers repeatedly. With each pass, the set of algorithms automatically adjusts its parameters until it eventually becomes very good at its assigned task. This is known as offline learning.

“In the case of facial recognition, you have to run the training procedure over millions of faces, with tens of thousands of iterations, and this is really computationally intensive. Hence, supercomputers play a foundational role in the development of AI-enabled systems,” Lin explained.
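The training loop Lin describes can be sketched at toy scale. The example below is an assumption-laden illustration rather than anything from SenseTime: it fits a two-parameter model to four made-up data points using gradient descent, one common way of adjusting parameters on each pass. A facial recognition network runs the same kind of loop over millions of images and parameters, which is where the computational cost comes from.

```python
# Hypothetical toy data that follows y = 2x + 1.
DATA = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]


def train(data, learning_rate=0.05, passes=2000):
    """Fit y = w * x + b by repeatedly nudging w and b to reduce squared error."""
    w, b = 0.0, 0.0  # start with uninformed parameters
    n = len(data)
    for _ in range(passes):
        # Gradient of the mean squared error with respect to each parameter.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        # Move each parameter a small step against its gradient.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


w, b = train(DATA)
print(f"w = {w:.4f}, b = {b:.4f}")
```

After enough passes the parameters settle close to 2 and 1, the values used to generate the data. The learning rate and the number of passes are illustrative choices, not recommended settings.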

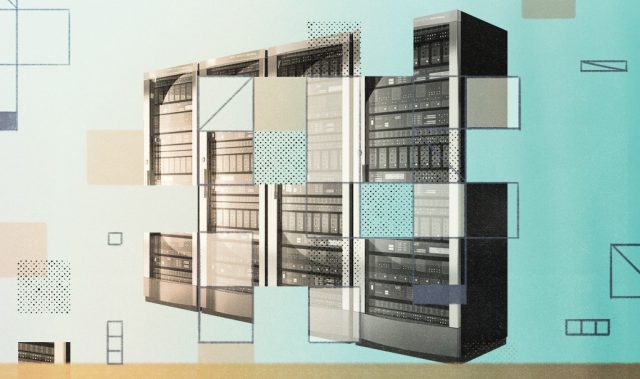

Supercomputers for sight

As a pioneer of deep learning in computer vision, SenseTime naturally has high-performance computing resources at its disposal. With a supercomputing platform comprising more than 6,000 high-performance graphics processing units (GPUs) and a large-scale deep learning training system that allows simultaneous training across hundreds of GPUs, SenseTime has no trouble crunching huge datasets to optimize its artificial neural networks and achieve accurate facial recognition.

“Typically, the range of computing power we operate with lies between several teraFLOPS to several hundred teraFLOPS,” Lin noted.

AI training is thus beyond the capacities of the average personal computer.

“But once you have optimized the artificial neural network for facial recognition, it can be run in real-time even on a mobile phone.”

This is possible because all an optimized algorithm needs to do is convert the image of a face into a string of several hundred numbers (known as a vector), then compare it against a database where every person is already encoded as a vector.

“This can be done very efficiently, even for millions of people,” said Lin.
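The matching step can be sketched as follows. This is a simplified illustration under stated assumptions: the four-number vectors, the names, the cosine similarity measure and the 0.9 threshold are all hypothetical, since the article does not say how SenseTime compares vectors, and real face vectors have several hundred entries.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def identify(query, database, threshold=0.9):
    """Return (name, score) of the closest enrolled vector, or (None, score)
    if even the best match falls below the threshold."""
    best_name, best_score = None, -1.0
    for name, vector in database.items():
        score = cosine_similarity(query, vector)
        if score > best_score:
            best_name, best_score = name, score
    if best_score < threshold:
        return None, best_score
    return best_name, best_score


# Hypothetical enrolled people, each already encoded as a vector.
DATABASE = {
    "person_a": [0.9, 0.1, 0.0, 0.4],
    "person_b": [0.1, 0.8, 0.5, 0.0],
    "person_c": [0.0, 0.2, 0.9, 0.3],
}

# Vector produced from a new photo (made-up values close to person_a).
query = [0.85, 0.15, 0.05, 0.38]
match, score = identify(query, DATABASE)
print(match, round(score, 3))
```

Each comparison costs only a few hundred multiplications and additions, which helps explain why a phone can run the lookup. This sketch scans every entry in turn; a database of millions would more likely use a vectorized or indexed search, a detail the article does not cover.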

Equipped with these capabilities, SenseTime has already made inroads into several industries, Lin added. The Guangzhou Public Security Bureau currently uses SenseTime’s intelligent surveillance system to facilitate criminal investigations; according to the company, since 2017, more than 2,000 suspects have been identified and close to 100 cases solved using its technology.

On a lighter note, microblogging website Weibo also relies on SenseTime’s facial recognition methods to enhance its user experience and widen its reach. Lin emphasized that “experience working with different vertical sectors is an important aspect that sets SenseTime apart from many competitors.”

More than meets the eye

But there are areas where the technology still falls short. For instance, under poor lighting, or when a person is “uncooperative” and not looking directly at the camera, the facial recognition system may be less effective.

“Also, when we try to scale up this recognition system to millions of people in a large city, there are bound to be people who may resemble each other, and this makes unique identification tricky. Coupled with the manually intensive process of annotating training datasets, there are still challenges that we have to tackle to take facial recognition to the next level,” said Lin.

This is where collaborations with academia play an important role, he commented, hence his appointment as the director of the CUHK-SenseTime Joint Lab. One project that Lin works on is the development of a deep learning framework to identify an individual in a video feed based on just a single portrait image. Rather than consider only visual data, the algorithm factors in temporal information so that a person of interest can be recognized even in environments that are different from where the portrait image was originally taken.

“A lot of research problems require a longer term to explore truly innovative methodologies, and some of that work may be done in the universities,” Lin noted, adding that SenseTime’s academic collaborations extend beyond China’s borders.

In February 2018, SenseTime partnered with the Massachusetts Institute of Technology, US, to leverage the latter’s strengths in AI research. Shortly after, it also signed memoranda of understanding with three Singapore-based organizations—the National Supercomputing Centre Singapore, Nanyang Technological University and telecommunications provider Singtel—as part of its international expansion plans.

Having helped computers open their eyes, SenseTime looks set to take the technology to the masses. Like the first animals with sight, machines may have a very different vision for the future, and it remains to be seen where humans fit into that picture.

This article was first published in the print version of Supercomputing Asia, January 2019.

———

Copyright: Asian Scientist Magazine; Photo: Lam Oi Keat.

Disclaimer: This article does not necessarily reflect the views of AsianScientist or its staff.