AsianScientist (Feb. 13, 2019) – Since 1965, Moore’s law has served as a roadmap for the chip manufacturing industry, which for decades has kept pace with the original dictum of doubling the number of transistors on a chip every one to two years. However, as we approach the physical limits of miniaturization, it is becoming increasingly clear that a new computing paradigm is needed.

For IBM, getting to ‘more than Moore’ is likely to involve quantum computers.

“We would like to achieve quantum advantage, which refers to the point where quantum computers not only speed up whatever the current classical computers can do, but solve problems that are impossible to solve on classical computers,” said Dr. Christine Ouyang, distinguished engineer, IBM Q Network Technical Partnership and Systems Strategies.

“But in order to realize these breakthroughs and make quantum computing both useful and acceptable, we will need to completely re-imagine information processing and the computer that will perform information processing.”

A powerful but nascent technology

At its simplest level, a classical computer is a collection of bits that are either 0 or 1. The more bits a computer has, the greater the number of possible states it can be in. For example, a single bit has two possible states: either 0 or 1, while two bits have four possible states: 00, 01, 10 or 11.
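The doubling described above can be checked with a few lines of Python. This is only an illustrative sketch; the helper name `bit_states` is made up for this example and is not from the article.

```python
from itertools import product


def bit_states(n_bits):
    """Return every possible state of n_bits classical bits as strings."""
    return ["".join(bits) for bits in product("01", repeat=n_bits)]


# One bit has two states, two bits have four, and each extra bit doubles the count.
for n in (1, 2, 3):
    states = bit_states(n)
    print(n, "bit(s):", len(states), "states:", ", ".join(states))
```

A register of n bits has 2^n possible states in total, but a classical machine occupies exactly one of them at any moment.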

In a similar way, each quantum bit, or qubit, has two basic states analogous to 0 and 1. But unlike a classical bit, which is like a coin lying flat on a table showing either heads or tails, a qubit can exist in a state known as superposition, a combination of 0 and 1 at once, like a spinning coin that is simultaneously heads and tails.

Although a classical computer with two bits has four possible states, it can only be in one of those states at any one point in time. A quantum computer with the same number of qubits, correlated through the phenomenon of quantum entanglement, can on the other hand exist in all four possible states at the same time, making it exponentially more powerful, at least in theory.
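To make the two-qubit picture concrete, here is a minimal sketch of a state-vector simulation in plain Python. It is a textbook-style illustration rather than anything IBM describes in this article: the state is assumed to be a list of four amplitudes ordered 00, 01, 10, 11, and the function names are invented for this example.

```python
import math

S = 1 / math.sqrt(2)  # normalisation factor of the Hadamard gate


def hadamard_first(state):
    """Apply a Hadamard gate to the first qubit of [a00, a01, a10, a11]."""
    a00, a01, a10, a11 = state
    return [S * (a00 + a10), S * (a01 + a11), S * (a00 - a10), S * (a01 - a11)]


def hadamard_second(state):
    """Apply a Hadamard gate to the second qubit of [a00, a01, a10, a11]."""
    a00, a01, a10, a11 = state
    return [S * (a00 + a01), S * (a00 - a01), S * (a10 + a11), S * (a10 - a11)]


def cnot(state):
    """Flip the second qubit when the first is 1 (swaps the 10 and 11 amplitudes)."""
    a00, a01, a10, a11 = state
    return [a00, a01, a11, a10]


def probabilities(state):
    """Probability of measuring each of 00, 01, 10, 11."""
    return [abs(a) ** 2 for a in state]


start = [1.0, 0.0, 0.0, 0.0]  # both qubits begin in state 0

# Superposition: all four two-bit states carry equal weight at once.
uniform = hadamard_second(hadamard_first(start))

# Entanglement: only 00 and 11 remain, so the two qubits always agree.
bell = cnot(hadamard_first(start))

print("uniform:", probabilities(uniform))
print("entangled:", probabilities(bell))
```

Note that a measurement still returns a single two-bit result. The amplitudes only set the odds of each outcome, which is part of why the extra power holds "in theory" and depends on well-designed algorithms.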

At the moment, even the most advanced quantum systems have fewer than a hundred qubits, whereas “business problems might require hundreds, thousands or even millions of qubits,” Ouyang said. In a further departure from classical computing, simply adding more qubits is not enough to improve the power of quantum computers.

Another significant challenge standing in the way of quantum computing is how to reduce the error rates of qubits. Part of the solution involves improving the coherence time of qubits—the length of time that researchers can maintain a qubit’s quantum state.

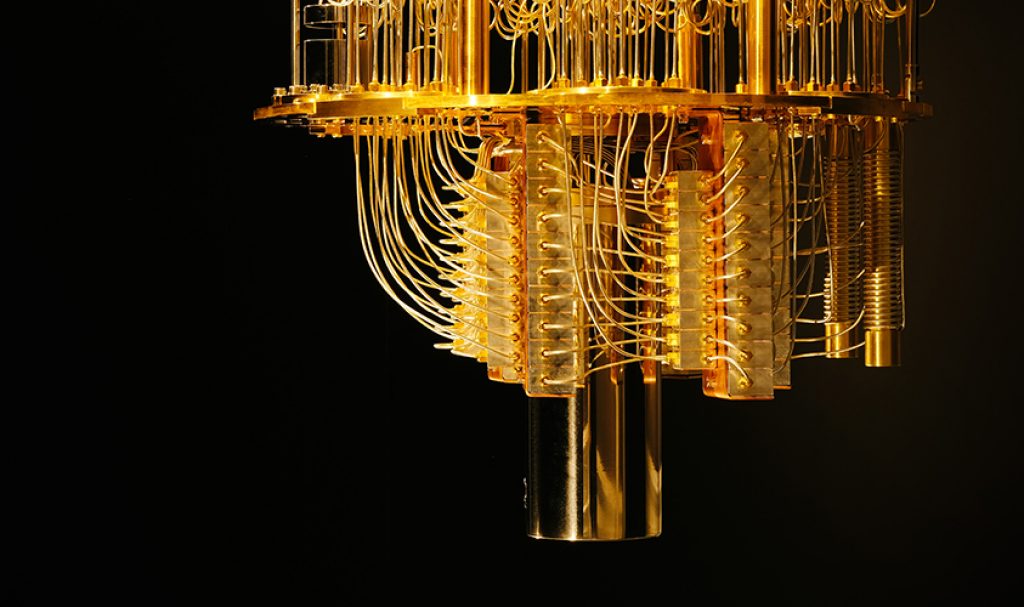

To protect them from random interference such as mechanical vibration, electromagnetic waves and temperature fluctuations, a quantum processor’s qubits are kept in a dilution refrigerator that is cooled to extremely low temperatures of 10–15 millikelvin, about a hundred times colder than outer space. Even then, they typically last just a few microseconds (although qubits in IBM Q systems can last as long as 100 microseconds, according to Ouyang), limiting the number of calculations that can be performed.
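A rough back-of-the-envelope sketch shows why coherence time caps the amount of computation. The coherence times below come from the article, but the gate duration of 100 nanoseconds is purely an assumed, illustrative figure, and the function name is hypothetical.

```python
# ASSUMPTION: time per gate operation, chosen only for illustration.
# The article does not give a gate duration.
ASSUMED_GATE_TIME_NS = 100


def max_sequential_gates(coherence_time_us, gate_time_ns=ASSUMED_GATE_TIME_NS):
    """Upper bound on back-to-back gates that fit within one coherence window."""
    coherence_time_ns = coherence_time_us * 1000  # microseconds to nanoseconds
    return coherence_time_ns // gate_time_ns


# "A few microseconds" (5 is used as an example) versus the 100 microseconds
# quoted for IBM Q systems.
for coherence_us in (5, 100):
    print(coherence_us, "microseconds ->", max_sequential_gates(coherence_us), "gates at most")
```

This is an optimistic ceiling: it ignores gate errors, which in practice limit useful circuit depth well before the coherence window runs out.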

“If you look at overall system performance, it is actually a very complex metric to assess how powerful a quantum computer is,” Ouyang said. “Coherence time is not the only factor; controllability and scalability are all challenging technical problems that need to be solved.”

Beyond hardware hurdles

Aside from the considerable hardware challenges, quantum computing requires an entirely new software stack, starting from the interactions with the actual device at the assembly language level, all the way up to the operating system, middleware, applications, and eventually moving to the cloud.

“In addition, we also have to develop a very efficient way to map data onto quantum computers and new algorithms that can deliver quantum speed-up for practical applications,” Ouyang added.

But the rate-limiting factor might not turn out to be the technology. The soft side of quantum computing matters as well, Ouyang said.

“If you think about the cloud or artificial intelligence, adopting these emerging technologies requires organizations to have a certain culture and a set of skills,” she said. “It usually takes decades for enterprises to fully embrace new technology.”

Recognizing the non-intuitiveness of programming for quantum computers and the need for cultural change, IBM has since 2016 made quantum computing available for free through its cloud-based IBM Q Experience. Over the past two years, IBM’s five-qubit and 16-qubit systems have been used by over 100,000 people, including not only scientists and developers but also students.

“Collectively, these people have run more than 6.5 million experiments and published more than 130 research papers. In December 2017, we launched the IBM Q Network and have since grown it into a healthy ecosystem of Fortune 500 companies, start-ups, universities and national research labs,” Ouyang said.

“All this tells you is that although it is still in an early stage, quantum computers are here today. It’s not science fiction anymore—it is a reality.”

The road ahead

Do the advancements in quantum computing then spell the end of traditional high-performance computing? For Ouyang, the answer is a categorical no.

First of all, not all problems would benefit from quantum computing.

“At least currently, we don’t think quantum computers would be good at solving big data problems because they can only take a small number of inputs but explore a large number of permutations simultaneously,” Ouyang explained.

“What we have at IBM is a hybrid computing approach. We are building an integrated system that would allow classical computers to tap into quantum computers to solve specific problems.”

The ultimate aim, however, is to overcome the aforementioned technical challenges and build what is known as a fault-tolerant universal quantum computer, which would provide quadratic or even exponential performance enhancements and achieve true quantum advantage. That lofty goal is likely to take several more decades, Ouyang said.
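For a sense of what a quadratic enhancement means, consider unstructured search, the standard textbook case. The article does not name a specific algorithm, so Grover's algorithm is used here only as an assumed illustration. It needs roughly (π/4)·√N lookups where a classical search may need up to N.

```python
import math


def classical_lookups(n_items):
    """Worst-case lookups for a classical search through n_items unsorted entries."""
    return n_items


def grover_lookups(n_items):
    """Approximate lookups for Grover's algorithm: about (pi / 4) * sqrt(n_items)."""
    return round(math.pi / 4 * math.sqrt(n_items))


for n in (10_000, 1_000_000):
    print(n, "items:", classical_lookups(n), "classical vs about", grover_lookups(n), "quantum")
```

An exponential enhancement would open a far wider gap than this, but either kind of gain assumes the fault-tolerant hardware described above.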

“But before we get there, we should be able to solve some problems considered unsolvable by classical computers with our current approximate universal computer,” she added, pointing to early applications in computational chemistry, financial risk analysis, optimization and quantum machine learning.

In fact, some of the first practical use cases could well come from Asia, where institutions and companies have been working closely with IBM Q researchers. Japan’s Keio University, for example, is home to IBM’s first commercial hub in Asia, and has helped to bring together partners such as chemical company JSR, Mitsubishi UFJ Financial Group, Mizuho Financial Group and Mitsubishi Chemical to develop quantum applications for business.

“We are working with many industry leaders and universities in the region to apply quantum computing technology, as well as build skills, and at the same time build a market for quantum,” Ouyang said.

Quantum computers for a quantum world

Underlying this drive to take quantum computers mainstream is the fact that nature itself is quantum and best described by a quantum system. As Nobel laureate Richard Feynman famously declared in 1981:

“Nature isn’t classical, dammit, and if you want to make a simulation of nature, you’d better make it quantum mechanical.”

For example, although today’s massively parallel computers are already able to run complex chemistry simulations, in reality there are several assumptions and simplifications that have been built into the models.

In pursuit of a quantum picture of nature, IBM Q researchers have used their quantum computing system to simulate beryllium hydride—a relatively small molecule, but the largest one simulated by a quantum computer to date. Their research paves the way for exact representations of larger molecules, a topic of great interest to pharmaceutical companies developing small molecule drugs and researchers searching for exotic new materials.

“The completely new paradigm of quantum computing could someday provide breakthroughs in many other disciplines too, such as optimization of very complex systems and artificial intelligence,” Ouyang said. “Our immediate goal is to solve the technical challenges and at the same time work with partners to develop the first wave of applications.”

This article was first published in the print version of Supercomputing Asia, January 2019.

———

Copyright: Asian Scientist Magazine; Photo: Connie Zhou/IBM Research.
