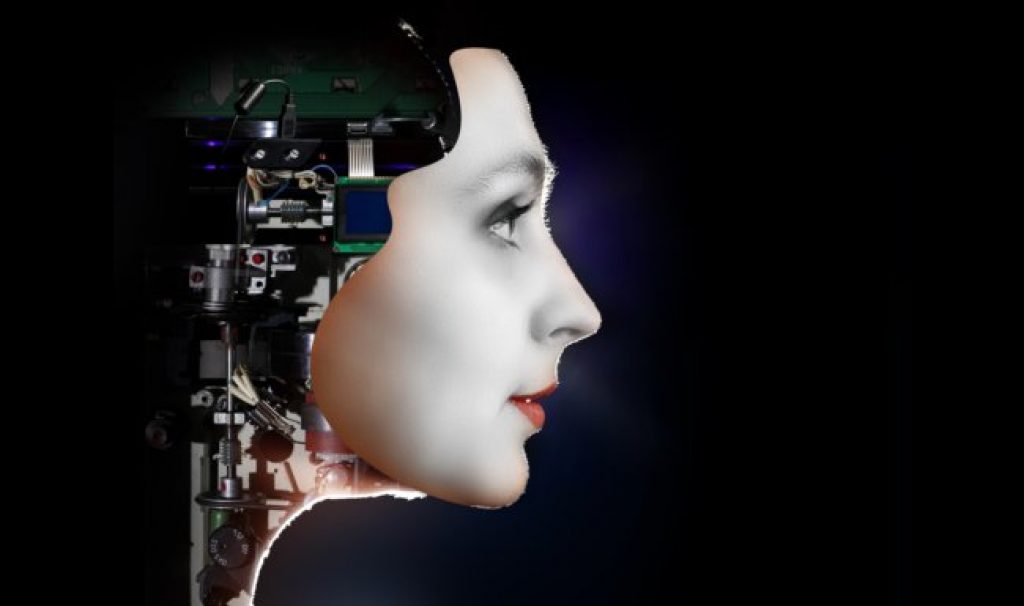

AsianScientist (Jan. 3, 2017) – By Feng Zengkun – Head to Singapore’s Nanyang Technological University (NTU) and you might be able to spot Nadine, a cyberhuman with soft skin, brunette hair, and a ready smile. Touted as the world’s most life-like robot, she can not only remember your name and past conversations, but also strike up a chat and even empathize with your troubles.

Over the past few years, scientists across Asia have unveiled a series of humanoid robots that could one day help to look after our children, keep grandma and grandpa company and give directions to people in public spaces such as museums and malls. Aside from Nadine, there is also JiaJia, a female robot created by Chinese researchers, and Geminoid, a male robot developed by renowned Japanese roboticist Hiroshi Ishiguro (see “What Humanoids Can Teach Us About Being Human”).

These social robots—so-called because they are meant to interact with people—could help to alleviate the lack of caregivers in aging societies such as Singapore, Japan, China and South Korea, and their limitless patience could also be useful in therapy for children with learning problems and the elderly who suffer from dementia.

Although the need is undoubtedly great, are robots currently up to the task? And even if they were, should machines replace human companions and caregivers?

The complexity of a chat

“Robots are highly integrated systems with many sophisticated technologies, but it is still really difficult for them to understand human beings,” said Dr. Takayuki Kanda, a senior research scientist at the ATR Intelligent Robotics and Communication Laboratories in Kyoto, Japan.

Not unlike people, robots rely on sensors, such as cameras for eyes and microphones for ears, to recognize images and sounds, and then process the information to react accordingly. However, while they can be programmed to learn to identify people, objects and words, they remain ill-equipped to detect nuances in human interactions, which range from sarcasm to passive-aggressiveness and subtext.

Most people instinctively pick up on a multitude of subtle cues, such as body language, facial expressions and tone of voice, to determine other people’s moods and feelings. Robots are currently unable to replicate such seamless collection and integration of cues, which means that they are usually unable to tell if a person is being sarcastic or sincere.

There are other technical challenges. As with human ears and eyes, robots’ microphone-ears can be distracted by surrounding noise, and their camera-eyes can be derailed by bad lighting, occlusion and environmental conditions such as rain. While people are good at recognizing others in unusual postures, such as elderly people who are hunched over, robots might be flummoxed by such variations.

Another challenge for robots is in parsing overlapping conversations. Professor Nadia Thalmann from NTU, who is Nadine’s creator, model and namesake, said, “When there are many people around Nadine, she has to decide who to look at, who to listen to, and, if there’s a discussion, when to speak and why. Research on multi-party interactions is very complex. Once, we had about 20 people around Nadine and she was completely confused.”

Also, while researchers have made progress in physical human-robot interaction, other scientists said that the scenarios investigated so far, such as simply shaking hands, are still too simple to be realistic for real-world applications. Robots need more physical and emotional intelligence if they are to care for the elderly or children.

Becoming human

Research institutes and companies across Asia are looking into solutions for these challenges. One widely used method is to collect massive amounts of data and use it to ‘teach’ the robots.

One success story so far is Xiaoice, an artificially intelligent software program designed by Microsoft’s Applications and Services Group East Asia to chat with people online (see “The AI Spring Is Coming”). Modeled after the persona of a 17-year-old Chinese girl, Xiaoice has had more than ten billion conversations with people, many of whom did not realize she wasn’t human until ten minutes into the chat, according to her creators.

But big data is no silver bullet. When Microsoft launched a similar chatbot called Tay in March 2016, she had to be taken down in just 16 hours, having evolved into an expletive- and hate-spewing Twitter stream. Unlike Xiaoice, Tay had somehow not managed to learn the social rules of engagement from her analysis of Twitter data. Instead, she merely reflected the very worst of human behavior, becoming like the racist trolls that haunt the underbelly of the internet.

All this suggests that robots need some guidance in their journey to becoming more human. In 2007, the government of South Korea said that it was drawing up a Robot Ethics Charter to prevent social ills from the use of robots. While there have been no updates, researchers said at the time that it could include guidelines that robots must not deceive or hurt people, and that their users’ personal details and other sensitive information must be kept confidential.

Nonetheless, ATR laboratories’ Kanda is optimistic that the technological challenges and ethical concerns can be resolved, noting that similar issues were raised about existing technologies such as smartphones and computers.

“In many of the domains, the problems can be gradually solved, going by recent trends in computer science. We’ll need collaboration among scientists from many disciplines, but I’m personally rather optimistic when it comes to communication robots,” he said.

This article was first published in the print version of Asian Scientist Magazine, January 2017.

———

Copyright: Asian Scientist Magazine.

Disclaimer: This article does not necessarily reflect the views of AsianScientist or its staff.